The interesting thing about having worked with VMware for so long, and now for Tintri, is that you see some of the same issues in different environments. That is what prompts most “how to” blog posts about fixing or addressing things, and it is no different for me. In the last couple of weeks I have been involved in a few cases where some basic vSphere principles, as well as some long-standing NFS best practices, were simply not followed. In my opinion, as things have gotten “easier” to install and manage, we tend to forget a few of the key things that prevent issues later in the life of the architecture. This is my short attempt to address some of that. A lot of what I am going to cover is in the Tintri Best Practices Guide, and I will pull out some key points from there as I go. I hate to say it, but sometimes we need to get back to basics.

If you have not read the guide but want a primer on the physical networking aspect, you can refer to this blog post that covers that specifically before we get into some of the vSphere specifics. What I want to address is some of the core basics that people seem to forget.

Preparing The vSphere Hosts

There are a few things you always want to do on the host side to ensure you are ready to connect a new Tintri VMstore to your environment. For many people this may be the first NFS device they are using, and they may be switching from iSCSI or Fibre Channel. You want to be sure you do the following:

- Install the Tintri VAAI Plugin

- Set the NFS advanced parameters per KB article 2239

  - NFS.MaxVolumes = 256 (set to the maximum for the version you are running)

  - Net.TcpipHeapMax = 1536

  - Net.TcpipHeapSize = 32

All of the above require host reboots, so before you reboot once you might as well do them all. The settings above are for vSphere 6.0; on later versions the numbers could be higher. The key is that both heap values represent memory allocations in MB. I can tell you from personal experience that on hosts built on older versions and then UPGRADED to 6.0 or later, these settings can be lower, in some cases even set to 0, and that will cause host latency that the Tintri UI will actually point out. It’s better to set these before you even start than to have to go back later.
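As a rough illustration of that check, the sketch below compares a host's current values against the numbers listed in this post and prints the `esxcli` commands that would raise any that are too low. The `current` dictionary is made-up sample data standing in for what `esxcli system settings advanced list` would report on a real host, and the targets are the vSphere 6.0 numbers from above, so treat them as a starting point rather than the right answer for every version.

```python
# Recommended values from this post (vSphere 6.0); heap values are in MB.
RECOMMENDED = {
    "/NFS/MaxVolumes": 256,
    "/Net/TcpipHeapMax": 1536,
    "/Net/TcpipHeapSize": 32,
}


def remediation_commands(current):
    """Return esxcli commands for every setting below its recommended value."""
    commands = []
    for option, target in RECOMMENDED.items():
        # A missing key is treated as 0, the worst case seen on upgraded hosts.
        if current.get(option, 0) < target:
            commands.append(
                f"esxcli system settings advanced set -o {option} -i {target}"
            )
    return commands


# Hypothetical values from a host that was upgraded in place.
current = {
    "/NFS/MaxVolumes": 8,
    "/Net/TcpipHeapMax": 1536,
    "/Net/TcpipHeapSize": 0,
}

for command in remediation_commands(current):
    print(command)
```

Remember that the new values only take effect after the host reboot mentioned above.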

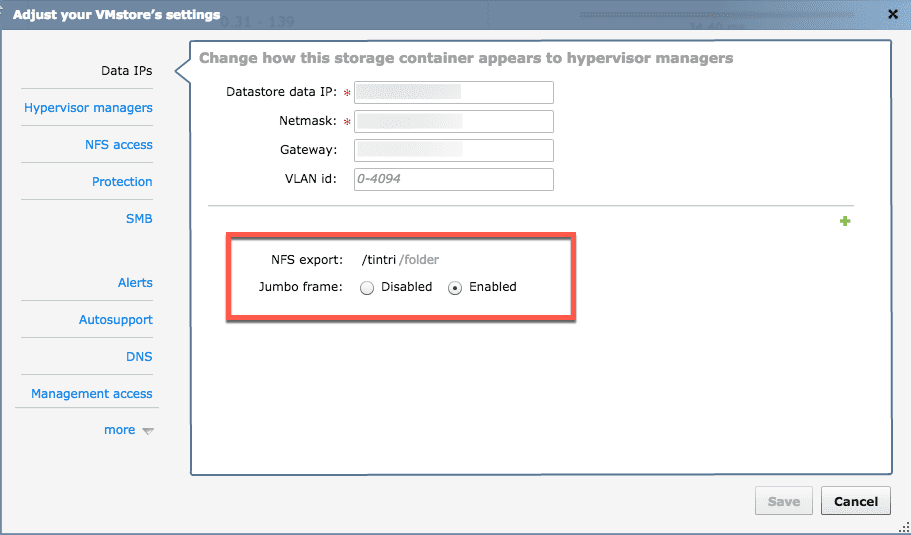

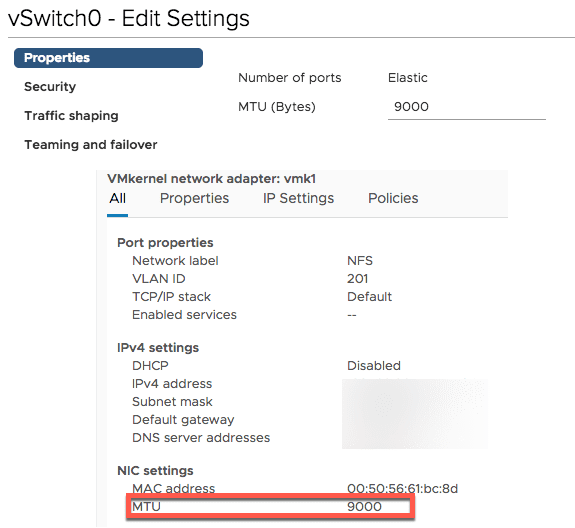

Consider Your MTU Size

Let’s move on to the host networking, as that is also where I see a lot of back-to-basics conversations happening around vSwitches, MTU size, and teaming policies. In general, most people working with network storage understand Jumbo Frames and now enable them on the physical switches. You can also enable them on the Tintri device itself, and that is recommended.

Assuming you have this set on the physical switches and the VMstore, you want to double-check the MTU not only at the vSwitch level but also on each VMkernel port that carries the storage traffic. I understand this may be trivial to point out, but that is why this is about getting back to basics.
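To make the end-to-end idea concrete, here is a small sketch that flags any hop whose MTU does not match the rest of the path and works out the payload size for a `vmkping` test with the don't-fragment flag set. The hop names, the `vmk3` interface, and the MTU values are hypothetical sample data; on a real host you would read them from the switches, the VMstore, and the host itself.

```python
IP_HEADER_BYTES = 20
ICMP_HEADER_BYTES = 8


def mtu_mismatches(path, expected=9000):
    """Return the names of hops whose MTU differs from the expected value."""
    return [name for name, mtu in path.items() if mtu != expected]


def vmkping_payload(mtu):
    """Largest ICMP payload that fits in one unfragmented packet at this MTU."""
    return mtu - IP_HEADER_BYTES - ICMP_HEADER_BYTES


# Hypothetical path where the VMkernel port was left at the default of 1500.
path = {
    "physical switch": 9000,
    "VMstore": 9000,
    "vSwitch1": 9000,
    "vmk3": 1500,
}

print(mtu_mismatches(path))
# -d sets "don't fragment", -s sets the payload size, -I picks the kernel port.
print(f"vmkping -I vmk3 -d -s {vmkping_payload(9000)} <VMstore data IP>")
```

If the ping with the large payload fails while a default-size ping works, something along the path is still not passing jumbo frames.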

Consider Port Group Teaming and TCP/IP Stacks

This is typically similar for most people who set up hosts these days. There is some agreement in the community on an acceptable number of vSwitches and physical NIC ports, but in most cases this is driven by what you have on your hosts to work with. Below is a typical setup based on 4 physical adapters, assuming the physical NICs are separate dual-port cards. The key comes down to the teaming policy overrides, which I sometimes find are not done. The layout may change for other configurations, but the KEY is the teaming override.

#vswitch0: vmnic0,vmnic2 (active/active)

- vmk1 (management), default TCP/IP Stack: vmnic0,vmnic2 (active/standby)

- vmk2 (vMotion), vMotion TCP/IP Stack: vmnic2,vmnic0 (active/standby)

- Virtual Machines: vmnic0,vmnic2 (active/active)

#vswitch1: vmnic1,vmnic3 (active/active)

- vmk3 (NFS), default TCP/IP Stack: vmnic1,vmnic3 (active/standby)

- vmk4 (NFC), Provisioning TCP/IP Stack: vmnic3,vmnic1 (active/standby)

The reason the override is important is that if both uplinks on a kernel port are set to active/active, you will not know which one the kernel binds to on startup. Also, assuming there is no LACP configured here, you want to isolate the traffic manually. This applies to both standard and Distributed Switches. By specifying the different TCP/IP stacks in later versions of vSphere you also define the traffic types. Many people do not even add a kernel port on the “Provisioning” stack, and therefore all Network File Copy (NFC), clone, and other operations will usually default to the management port, causing some management hiccups at some point. By defining the vMotion and Provisioning stacks you ensure the traffic for those will use those kernel ports.
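One way to sanity-check the overrides is sketched below. It models the kernel port groups from the layout above and reports any that do not have exactly one active uplink, or that share an active uplink with another kernel port group on the same vSwitch. The port group names are hypothetical, and the "no shared active uplink" rule is my reading of isolating the traffic manually on a two-uplink vSwitch, not a hard vSphere requirement.

```python
from collections import Counter

# Port group name -> (vSwitch, active uplinks, standby uplinks).
# Hypothetical names; uplink order mirrors the layout in this post.
KERNEL_PORT_GROUPS = {
    "Management": ("vSwitch0", ["vmnic0"], ["vmnic2"]),
    "vMotion": ("vSwitch0", ["vmnic2"], ["vmnic0"]),
    "NFS": ("vSwitch1", ["vmnic1"], ["vmnic3"]),
    "Provisioning": ("vSwitch1", ["vmnic3"], ["vmnic1"]),
}


def teaming_problems(port_groups):
    """Return a list of human-readable problems with the teaming overrides."""
    problems = []
    active_seen = Counter()
    for name, (vswitch, active, _standby) in port_groups.items():
        if len(active) != 1:
            problems.append(
                f"{name}: expected exactly one active uplink, found {len(active)}"
            )
        for nic in active:
            active_seen[(vswitch, nic)] += 1
    for (vswitch, nic), count in active_seen.items():
        if count > 1:
            problems.append(
                f"{vswitch}: {nic} is active for {count} kernel port groups"
            )
    return problems


def failover_command(name, active, standby):
    """Build the esxcli override command for a standard vSwitch port group."""
    return (
        "esxcli network vswitch standard portgroup policy failover set "
        f"-p {name} -a {','.join(active)} -s {','.join(standby)}"
    )


print(teaming_problems(KERNEL_PORT_GROUPS))
for name, (_vswitch, active, standby) in KERNEL_PORT_GROUPS.items():
    print(failover_command(name, active, standby))
```

The generated commands only apply to standard vSwitches; on a Distributed Switch the same override is set on the distributed port group in vCenter.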

It also goes without saying that each of these should be a separate VLAN and IP subnet. I have seen environments where the VLAN was different but the same IP range was used on the kernel ports, and communication was not functioning. It costs nothing to add VLAN tags and create a new IP range, so you might as well do it. If you have more than 4 ports you can create a third vSwitch just for Virtual Machine traffic, but in some cases you may only be working with two physical links. Either way, work with what you have.
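The overlapping-range mistake is easy to catch before it bites. The sketch below uses Python's standard `ipaddress` module to list any pair of kernel ports whose subnets overlap. The port names and addresses are invented for illustration only.

```python
import ipaddress
from itertools import combinations


def overlapping_subnets(kernel_ports):
    """Return pairs of kernel ports whose IP networks overlap."""
    networks = {
        name: ipaddress.ip_interface(address).network
        for name, address in kernel_ports.items()
    }
    return [
        (a, b)
        for a, b in combinations(networks, 2)
        if networks[a].overlaps(networks[b])
    ]


# Hypothetical addressing: vMotion and NFS share a range despite separate VLANs.
kernel_ports = {
    "Management": "10.0.10.21/24",
    "vMotion": "10.0.20.21/24",
    "NFS": "10.0.20.22/24",
    "Provisioning": "10.0.40.21/24",
}

print(overlapping_subnets(kernel_ports))
```

An empty list means every kernel port sits in its own range, which is what you want here.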

Connect To Tintri via NFS

Once all of this is set up, THEN you are ready to mount the Tintri storage via NFS on your hosts. Okay, you can probably mount it anytime and it will work, but my feeling is you don’t just want it to work, you want it to work near perfectly. I can tell you that when I get calls about issues, the first places I look are the NFS heap settings and the physical and virtual networking. Most of the time the issue will be found in those places because, let’s face it, Tintri just works. It works even better if you stop and take a few minutes to review your environment first, before going all Leeroy Jenkins adding storage and moving Virtual Machines around.
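For completeness, here is a minimal sketch of building the mount command once the checks above pass. The data IP and datastore name are placeholders, and the `/tintri` export path is an assumption on my part, so confirm it against your VMstore before using it.

```python
def nfs_mount_command(vmstore_data_ip, datastore_name, export="/tintri"):
    """Build the esxcli command that mounts an NFS export as a datastore.

    The default export path is an assumption; verify it on your VMstore.
    """
    return (
        f"esxcli storage nfs add -H {vmstore_data_ip} "
        f"-s {export} -v {datastore_name}"
    )


# Hypothetical data IP and datastore name.
print(nfs_mount_command("10.0.30.50", "tintri-ds01"))
```

Using the same IP, export, and datastore name on every host keeps vSphere treating it as one shared datastore.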

Chris Colotti's Blog Thoughts and Theories About…
