In part one of this series, we examined the minor issue of the clone process being managed and loaded onto a single host in a cluster under vCloud Director. In part two, we expanded on that to see how the different vApp deployment scenarios actually clone to other hosts. Now, in part three, we want to understand what, if anything, we can do from a design perspective to deal with these known challenges.

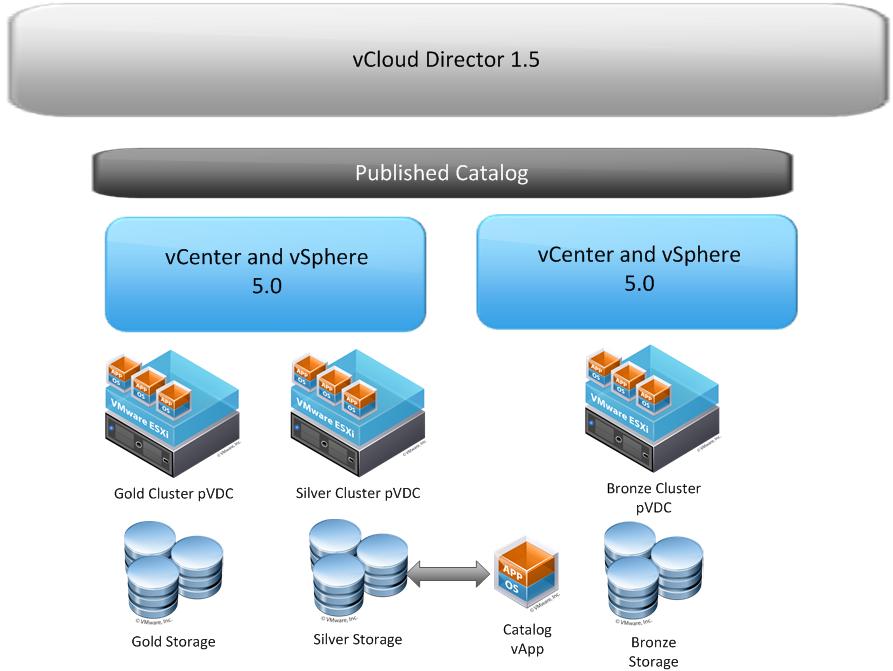

The script located in part one is certainly useful for balancing the powered-off Virtual Machines across a cluster, which helps on the source side of the clone process. That being said, what can we do to design a large-scale, provider-hosted catalog infrastructure where the possibility exists of having multiple vCenters, clusters, and Virtual Datacenters? Let’s take a look at the original layout I used for testing. The only change is that we will assume there is one Catalog vApp instead of two.

Using a similar diagram as the basis, and assuming a provider may want to maintain a single published catalog alongside the organizations’ unpublished catalogs, what might we do? From the testing, we know we need to keep the following situations in mind as design considerations.


- vCloud Director Cells will proxy traffic on their HTTP port for exports (provided you have not configured a management interface that also has direct access to the ESX hosts, in which case the copy may hit there instead).

- ESX hosts will use their management interface for network-based copy to a Cell, or to another ESX host in a different cluster in the same vCenter where storage is not equally presented.
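The situations above can be summarised as a small decision function. This is only a sketch of the behaviour observed in this series, not anything vCloud Director exposes: the names `CatalogItem`, `TargetCluster`, and `expected_copy_path` are hypothetical, and the model assumes the only inputs that matter are the vCenter and which datastores the target cluster can see.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class CopyPath(Enum):
    BLOCK_COPY = "block-based copy on shared storage"
    HOST_NETWORK_COPY = "network copy over the ESX management interfaces"
    CELL_EXPORT_IMPORT = "export/import proxied through a vCD cell"


@dataclass(frozen=True)
class CatalogItem:
    """Hypothetical model: where the catalog vApp template is stored."""
    vcenter: str
    datastore: str


@dataclass(frozen=True)
class TargetCluster:
    """Hypothetical model: where the vApp is being deployed."""
    vcenter: str
    visible_datastores: FrozenSet[str]


def expected_copy_path(item: CatalogItem, target: TargetCluster) -> CopyPath:
    # A different vCenter means the copy goes through a cell as an export/import.
    if item.vcenter != target.vcenter:
        return CopyPath.CELL_EXPORT_IMPORT
    # Same vCenter and the target can see the source datastore: no network copy.
    if item.datastore in target.visible_datastores:
        return CopyPath.BLOCK_COPY
    # Same vCenter, storage not equally presented: host-to-host over management.
    return CopyPath.HOST_NETWORK_COPY


item = CatalogItem(vcenter="vc1", datastore="catalog-ds")
same_storage = TargetCluster("vc1", frozenset({"catalog-ds", "gold-ds"}))
other_cluster = TargetCluster("vc1", frozenset({"bronze-ds"}))
other_vcenter = TargetCluster("vc2", frozenset({"catalog-ds"}))

for target in (same_storage, other_cluster, other_vcenter):
    print(expected_copy_path(item, target).value)
```

Walking a planned layout through a function like this is a quick way to see which deployments will land on the management network or the cell HTTP interface before anything is built.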

Knowing this, here are some initial recommendations you might want to consider. None of them are gospel, just some things to think about.

- Consider the network connectivity of the vCD Cells carefully. Possibly put the HTTP ports on a separate subnet and use a management interface that sits on the same layer 2 segment as the ESX management network, to isolate incoming copy traffic from HTTP portal traffic. Essentially, keep all “VMware Management” traffic on a separate subnet. A good reference for understanding the networking and static routing needed to make some of these suggestions work is Hany Michael’s post about publishing vCD on the internet. Although it is specific to that scenario, the network segmentation is good to understand now that we know how the traffic flows. You may even look to have more interfaces on your vCD cell to meet the varying requirements.

- On vCD Cells use separate VMNIC interfaces for HTTP/Console/NFS/Management and enable Jumbo Frames on the Management/NFS ports. The goal is to prevent HTTP portal traffic from getting caught up in the copy processes.

- Enable Jumbo frames on the NFS Storage, switches in between, and on the VMKernel ports on ESX hosts. This may provide some performance improvement on the Management Network copy processes that we have seen.

- Alternatively, create VMKernel interfaces for vCloud Director communication that are separate from the Management Interface. I have not tested this, but in theory it may achieve the same thing.

- Within at least the initial primary vCenter instance consider a cross-cluster datastore to host the catalog items on. This will at least provide block based copy within that vCenter, but will not help when additional vCenters are added to vCD. You will be left with network copy when deploying a vApp from a catalog across vCenters. As pointed out by a comment on Part One, having a VAAI enabled array in this case would help offload the block based copy process. However, once you need to deploy to any cluster not seeing that Datastore, OR across to another vCenter you are back to network copy and/or vCD Export and OVF import.
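For the Jumbo Frames suggestions in the list above, the benefit only appears when every hop between the cell, the NFS storage, and the VMKernel ports carries the larger MTU. The sketch below is a minimal way to sanity-check a planned path on paper. The hop names and the 9000-byte target are assumptions for illustration, and the MTU values would have to be gathered by hand from your own switches, hosts, and cells.

```python
from typing import Dict, List

# Assumed jumbo frame MTU; confirm the value your network and storage actually use.
JUMBO_MTU = 9000


def undersized_hops(path_mtus: Dict[str, int], required: int = JUMBO_MTU) -> List[str]:
    """Return the hops whose MTU is below the required size."""
    return [hop for hop, mtu in path_mtus.items() if mtu < required]


def effective_mtu(path_mtus: Dict[str, int]) -> int:
    """The usable MTU of a path is limited by its smallest hop."""
    return min(path_mtus.values())


# Hypothetical copy path from a vCD cell to an ESX host.
copy_path = {
    "vcd-cell-mgmt-nic": 9000,
    "access-switch": 9000,
    "core-switch": 1500,  # one device left at the default
    "esx-vmkernel-port": 9000,
}

print(undersized_hops(copy_path))
print(effective_mtu(copy_path))
```

A single device left at the default MTU is enough to undo the change, which is why the switches in between are called out alongside the storage and the hosts.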

One last thought is that you could dedicate a cluster and Provider vDC to just hosting and deploying templates. This is slightly different from just creating an Organization for catalogs and templates; I am suggesting an actual Provider vDC be used so the hosts are dedicated to these tasks. It would not need to be large, as there would not be much load from running Virtual Machines. Maybe this is also a cluster used for provider-only workloads rather than customer-centric ones. Organizations could then also use it to host their catalogs, and maybe there is a different cost associated with catalog items versus running Virtual Machines. Of course, all customers would then need to be sure to place catalog Virtual Machines in this vDC. This would ensure that the I/O and network load for a good portion of the process is consumed by dedicated hosts.

What I can confidently say is that there is no single best solution to this challenge. What I can offer is that most customers I have seen thus far are installing vCloud Director in smaller Proof of Concept or lab situations and have not really thought about larger-scale deployments where a second vCenter will be in play. I do personally know of at least two customers that have two vCenters planned or currently deployed in their vCloud Director as of today. The purpose of this three-part series was to give you some deep-dive background on what is going on under the covers. How you choose to address these challenges is up to you at the end of the day, but I hope it helps to understand how the parts are actually communicating behind the scenes so you can make better design decisions. Comments and other ideas are certainly welcome.

Chris Colotti's Blog Thoughts and Theories About…


Thanks a lot for the insight on the Export/Import process of the Transfer NFS share. Going to make some changes in the design.

Regards,

Erik

Changes yet again! 🙂 You left VMworld with changes already my friend. Hopefully this one keeps you moving forward with a great design. Let me know if you see anything different or new than what I saw in the lab as you build it out.

Fantastic series dude. Definitely some great thoughts for design considerations. I also hope developers are reading this so they can take into account ways to improve the vCloud product. I would love to see a 2-5 vNIC Virtual Appliance where we could map a NIC to a port group that takes care of a certain kind of traffic (like how we do in basic ESXi installs today). 1 vNIC for HTTP, 1 vNIC for Remote Console, 1 vNIC dedicated to NFS traffic, perhaps a vNIC to a shared LUN/Datastore for certs & sysprep images that need to be shared between cells, etc. It may burn up a few more IP addresses, but it would create a separation at least. It doesn’t have to be vNICs, but it could be some sort of logic in a virtual appliance.

This also has a scale out design factor for some potential Vblock customers I talked to who asked if they could use the NFS storage in the AMP as the transfer folder. Again, nice work and experimentation.

I know it has already changed the way a few people are looking at the designs to account for traffic flows. I have already asked about the appliance and if it will support more than two vNICs. No idea yet but I am all over it 🙂

This is really interesting. We currently run VCD with only 1 interface. The real problem I see is that most of the virtual appliances use E1000. We use Cisco UCS 10GB FCoE and have been updating everything to use VMXNET3. We don’t know how this will work with some of the appliances, but right now with just one 1 GB interface on VCD I could see some scaling constraints for sure. Kind of like Kendrick stated with remote console and so on.

I am definitely up for recommending something more efficient… We also run a VCD cluster so maybe that helps with the Bandwidth limit. Add to that a VAAI capable array – that helps as well. I also believe that the VCD interface, NFS, and etc. are all on the same vlan…. After reading this I am definitely considering some major changes in our design… man its amazing that now we are really getting deep into this…

Thanks again Chris and all you experts for what you do.

Interesting article, thanks.

This raises the question how long a shadow copy lives when you copy it from Bronze to Bronze. If you deploy a catalog vapp from ovdc1 to ovdc2 which has different storage VCD is creating a shadow VM and places it into the system-rp of the PVDC. But when is this shadow copy deleted? I looked at the Database and saw the VM in vm_container, but it has no lease time. So it stays there forever, consuming disk space, without being potentially used if all related, deployed vapps are manually deleted.

Shadow copies live as long as there is a Fast Provisioned clone leveraging it. So until all the virtual Machines using that shadow are gone the shadow will remain. William Lam has done some research on this as well specifically with the Shadow Copies.

Hi! In this scheme, where you use 1 NIC for HTTP and 1 NIC for Management, how would you access the Web Console for administrative purposes from the management network? Ports 443 and 80 are only bound to the payload interfaces.

Thanks in advance!

Pablo.-

In this case, management would be for Linux management, not vCloud Director, as that is always done via HTTP.

The problem is that to be able to set the public address for HTTP, Proxy and API, I have to log on first, and I can’t do that because the Payload interfaces are in a DMZ where I have no other server to connect from. Even for further administration, if I want to upload an ISO or something big, I should do it through the Web and not locally.

Is there any chance to bind the Console to the management NIC, too?

Thanks for the quick response!

Pablo.-

In that case, just don’t use a management NIC. It is not a requirement; just use one for HTTP and one for console and that’s it. You must manage vCD via the HTTP interface. The purpose of a “management” interface is for people that want separate SSH or other direct OS management. In a case with only 2 interfaces, the HTTP interface doubles as the management one.

Hi

If a multi-cell vCloud Director has one interface in the NFS VLAN, and all my ESXi servers in the Provider vDC / cluster also have an interface in the NFS VLAN, does the vCloud Director copy/export/import process occur over the vCD HTTP interface or the NFS interface (shortest path) to the ESXi servers?

I mean, does vCloud Director know that it has direct access to the ESXi NFS interface instead of going through an L3 hop?


The NFS VLAN will be used by both ESXi and vCD ONLY to access the storage. It will not be used as a direct path to pass traffic to and from each other during a copy operation. As stated above, the copy will occur between the vCD cells and the ESXi hosts, from the vCD HTTP port to the ESXi Management port, which is why you may want those ports adjacent.
