Late last year Tintri announced the new EC6000 and T1000 platform to customers. One thing I have noticed in various support tickets is that many of the root causes have actually been upstream networking issues, or simply a lack of network validation at deployment time or afterward. Like anything, these issues can be easily prevented with a better understanding of how networking works on the new Tintri EC6000 and T1000 series. I am also going to apply some personal best practices here. If you have not figured it out, the EC series and the T1000 are based on the same platform chassis, hence the networking is identical.

The Tintri Networks Available

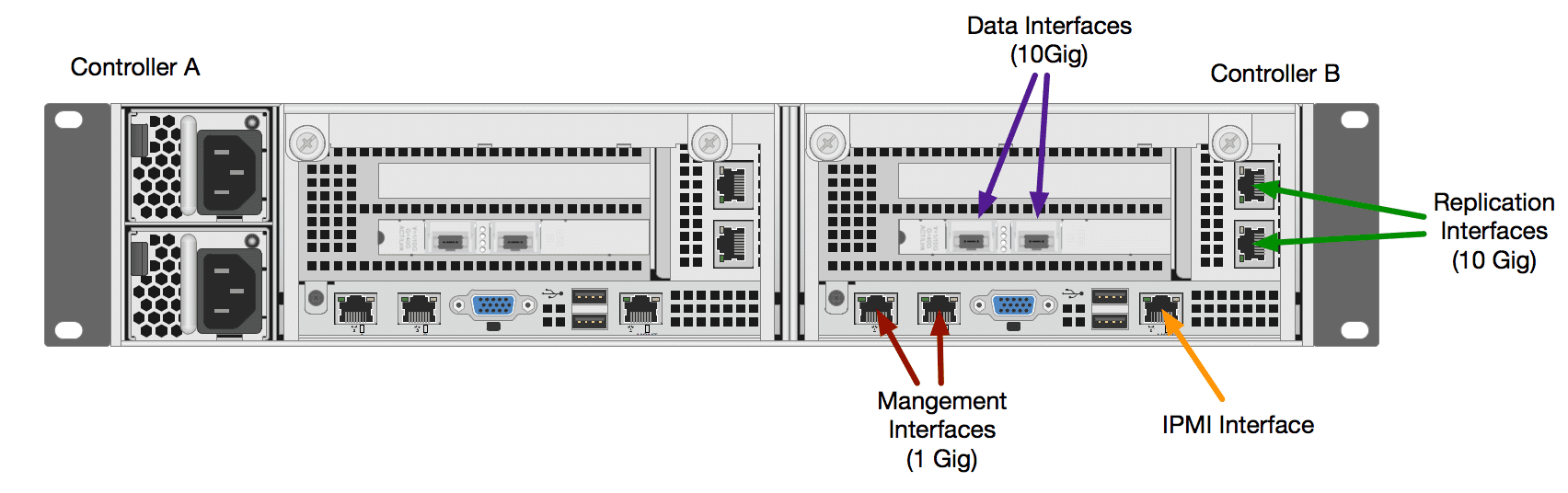

First let’s take a look at the networks that are available on these new platforms. I will cover the most common ones. The physical connectivity of the PCI cards can vary, but how the ports are used remains the same. The back panel diagram illustrates the most commonly used ports. You will note that each controller has a duplicate interface configuration. Other configurations are possible, but this is a pretty common setup.

- IPMI Port: Remote access capability using IPMI command line tools

- Management Interfaces: Used for UI/API access and used by TGC (Tintri Global Center)

- Data Interfaces: Used by hypervisors to mount the NFS storage

- Replication Interfaces: Dedicated for Tintri-to-Tintri or Cloud Connector replication

Each interface can be configured using VLAN trunks to provide different VLANs on each set of interfaces, or they can simply use access ports with each of the four bound to a single VLAN. This is entirely up to you based on your requirements. Going forward, let’s assume these are using access ports for simplicity.

How The Tintri Network Pairs Behave

There are two different ways these port pairs can behave, depending on whether you configure them for LACP or not. Out of the box LACP is not enabled, but it can be enabled in the VMstore settings. For the sake of simplicity, let’s assume LACP is not enabled going forward.

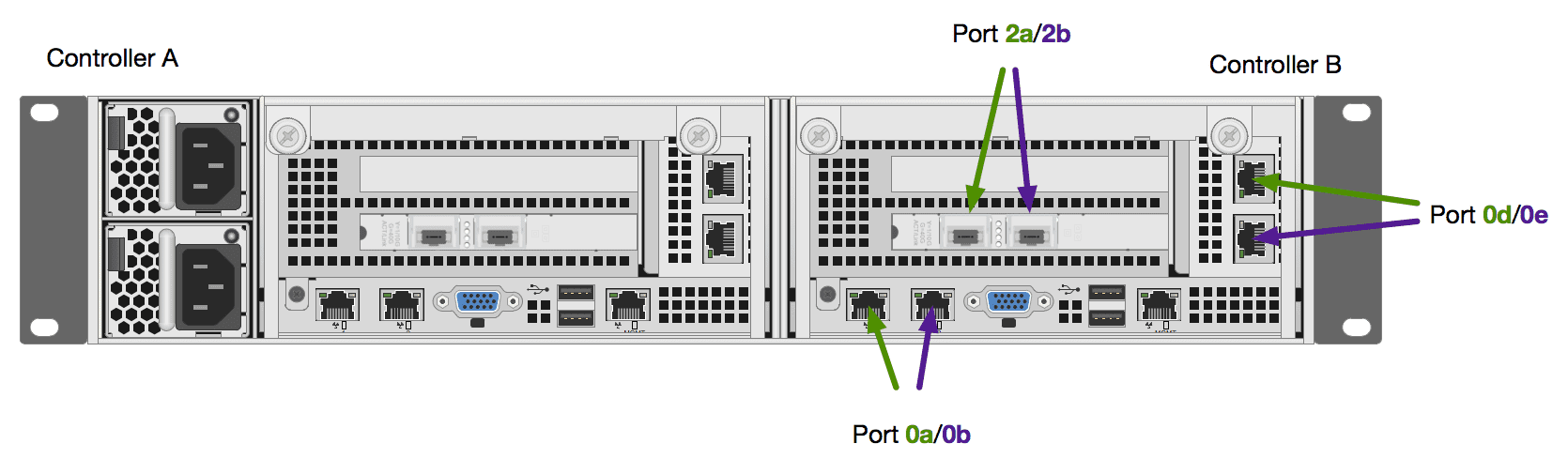

Here we can see that each pair has a primary/standby designation. Generally speaking, the “left” or “top” ports are the primary connection. The other way to identify the primary is by the lower letter designation. This is important because the Tintri OS is configured to always use the primary connection path if it is available. In a non-LACP configuration, this means that if your primary switch fails and later comes back, the network will move to the standby port and then back to the primary. In an LACP configuration this would not be the case.
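
To make the primary-preferred behavior concrete, here is a minimal sketch in Python. It is only a toy model of the non-LACP behavior described above, not Tintri code: the `PortPair` class, the `replay` helper and the event format are all names I made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class PortPair:
    """Toy model of one non-LACP port pair (hypothetical, not a Tintri API)."""
    name: str
    primary_up: bool = True
    standby_up: bool = True

    def active_port(self):
        """Return the port carrying traffic, or None if both links are down."""
        if self.primary_up:
            return "primary"  # the primary is always preferred when available
        if self.standby_up:
            return "standby"
        return None


def replay(pair, events):
    """Apply (port, is_up) link events in order; record the active port after each."""
    history = []
    for port, is_up in events:
        if port == "primary":
            pair.primary_up = is_up
        elif port == "standby":
            pair.standby_up = is_up
        else:
            raise ValueError(f"unknown port: {port}")
        history.append(pair.active_port())
    return history
```

Replaying a primary switch outage followed by its recovery, `replay(PortPair("data"), [("primary", False), ("primary", True)])`, gives `["standby", "primary"]`: traffic moves to the standby and then fails back.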

It is also important to understand that if these ports are properly configured, the system will NOT fail over the controller just because one of these ports loses its network path. If the controller does fail over, the IP addresses are moved to the other controller and the behavior is the same. Where this becomes problematic is if the access port VLAN is misconfigured at deployment or later on down the road.

Proper Cabling Of Tintri Network Interfaces

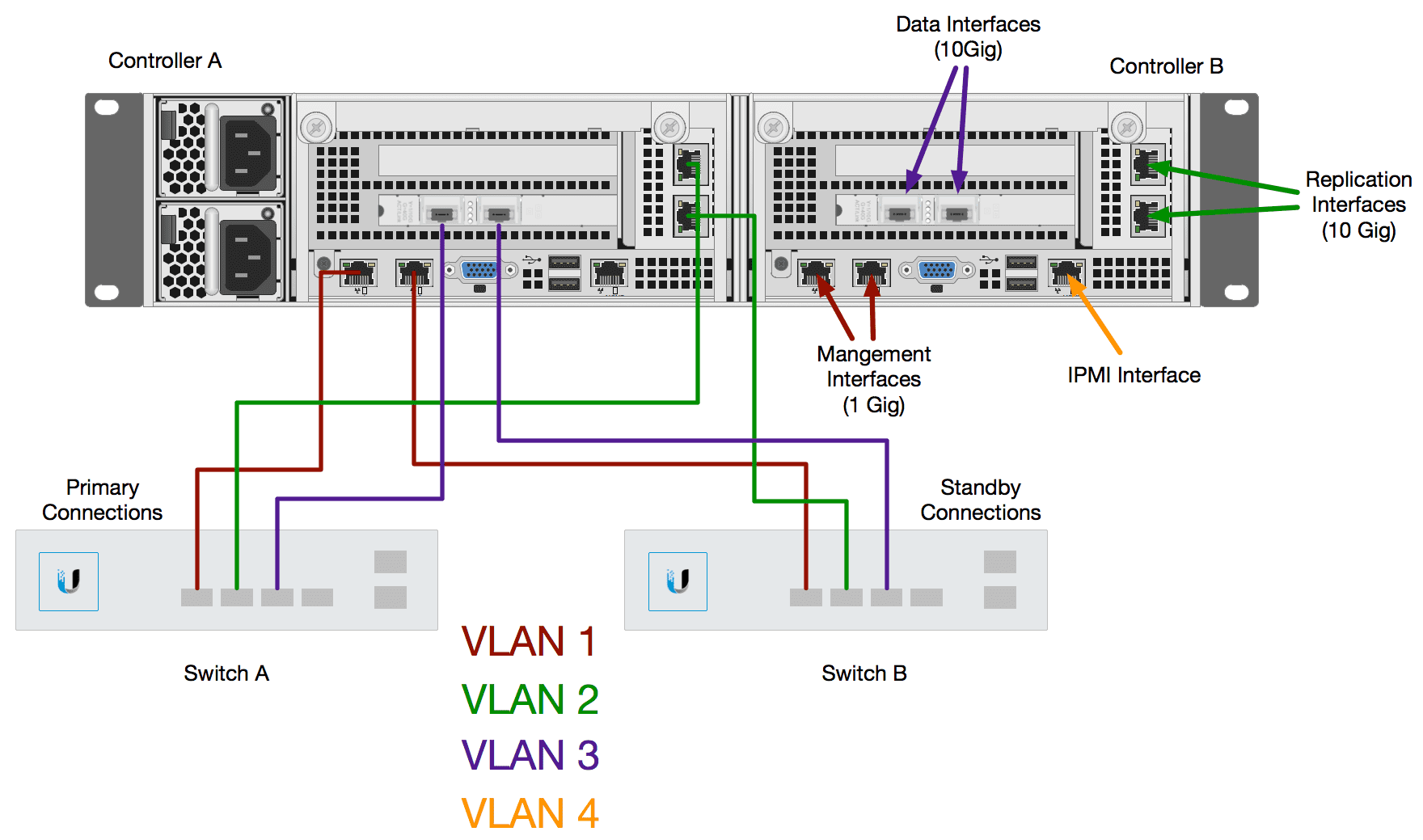

Based on the above information, and again assuming that we are not configuring for LACP, the diagram shows the proper network connections. This also assumes VLAN access ports are in place for simplicity.

Really, this is very simple. Primary interfaces are connected to Network Switch A, and standby interfaces are connected to Network Switch B. These could be top-of-rack switches or other edge switches. You would connect both controllers the same way, since only one controller is ever active at a time. This configuration ensures that if a single port or the whole switch fails, all traffic moves to Switch B without a controller failover. During a controller upgrade, connectivity would also remain stable. You could make this more complicated by reversing the connections on Controller B, but that is up to you.

If we did happen to enable LACP, we simply need to be sure that the two switches can support cross-switch LACP. In my personal lab this is not available to me; I can only do LACP on ports that exist on the same switch. In that case I could cable all of Controller A to Switch A and all of Controller B to Switch B. However, that would require a controller failover just for a switch failure. Again, depending on your switches and your requirements, that may be something you want or have to do.
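
The difference between the two cabling layouts can be checked on paper with a small sketch. This is just an illustration under my own assumptions: the switch names, the `PAIRS` tuple and the function names are hypothetical, and the plan is a plain dictionary rather than anything read from a VMstore.

```python
from itertools import product

# Port pairs per controller (IPMI is left out of this sketch)
PAIRS = ("management", "data", "replication")
ROLES = ("primary", "standby")


def recommended_plan():
    """Primary ports to switch-a, standby ports to switch-b, on both controllers."""
    return {
        (ctrl, pair, role): "switch-a" if role == "primary" else "switch-b"
        for ctrl, pair in product("AB", PAIRS)
        for role in ROLES
    }


def per_controller_plan():
    """Same-switch LACP layout: all of controller A on switch-a, all of B on switch-b."""
    return {
        (ctrl, pair, role): f"switch-{ctrl.lower()}"
        for ctrl, pair in product("AB", PAIRS)
        for role in ROLES
    }


def pairs_lost(plan, failed_switch):
    """List (controller, pair) entries left with no link when failed_switch goes down."""
    lost = []
    for ctrl, pair in product("AB", PAIRS):
        switches = {plan[(ctrl, pair, role)] for role in ROLES}
        if switches == {failed_switch}:
            lost.append((ctrl, pair))
    return lost
```

With the recommended plan, `pairs_lost(recommended_plan(), "switch-a")` is empty, so no controller failover is needed. With the per-controller plan, every pair on Controller A is lost when Switch A fails, which is why that layout needs a controller failover to survive a switch failure.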

Testing Of Tintri Network Interfaces

Long ago I wrote about the importance of a test plan on vSphere hosts, especially those with many VLAN port groups. Tintri has long said that their design mimics that of vSphere hosts, so testing the networks really is no different. Assuming we stick with the basic configuration above, the test plan is fairly simple and can be done with basic ICMP ping tests.

- Continuous Ping admin ports

- Pull primary port cable (Observe failover, a few dropped pings)

- Re-connect primary (Observe failback, a few dropped pings)

- Controller should NOT fail over unless both cables are removed

- Repeat above for replication ports

- Repeat above for data ports

- Manually fail over the controller and repeat all three tests

- You can also fail an entire switch or down the ports to simulate

- Finally, test an automated controller failover by removing both ports of the same pair, which simulates an internal card failure
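
The ping portion of the plan above can be scripted. Below is a rough sketch, not a finished tool: `ping_once` assumes the Linux `ping` flags `-c` and `-W` (other operating systems use different flags), and the `max_drops=3` threshold is my own stand-in for "a few dropped pings". All function names are made up for this example.

```python
import subprocess


def ping_once(host, timeout_s=1):
    """Send one ICMP echo; True on a reply. Assumes Linux ping flags (-c, -W)."""
    result = subprocess.run(
        ["ping", "-c", "1", "-W", str(timeout_s), host],
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return result.returncode == 0


def outages(samples):
    """Group consecutive failed samples into (start_index, length) windows."""
    windows = []
    start = None
    for i, ok in enumerate(samples):
        if not ok and start is None:
            start = i  # first dropped ping of a new outage
        elif ok and start is not None:
            windows.append((start, i - start))
            start = None
    if start is not None:  # the log ended while pings were still failing
        windows.append((start, len(samples) - start))
    return windows


def acceptable(samples, max_drops=3):
    """True if every outage is short enough to be a port failover or failback.

    max_drops is an assumed threshold, not a Tintri-documented value.
    """
    return all(length <= max_drops for _, length in outages(samples))
```

In practice you would call `ping_once` against the interface IP once a second while pulling and re-connecting cables, collect the results in a list, and pass that list to `outages` to see one short window per failover and failback. A long window suggests the standby path is not actually working, for example because of a mismatched access port VLAN.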

These tests can and should be performed regularly, because changes do happen on the network. If all of them pass, you should have no issues during controller upgrades, which fail over the controllers as part of the upgrade. You should also have no connectivity issues from internal port or card failures. The best defense is always a good offense. Hopefully this will help customers and partners as they deploy the new EC6000 and T1000 platform.

Chris Colotti's Blog Thoughts and Theories About…


Do you have documentation for configuring the IPMI port on the Tintri 7060 series?

I’m sorry I don’t