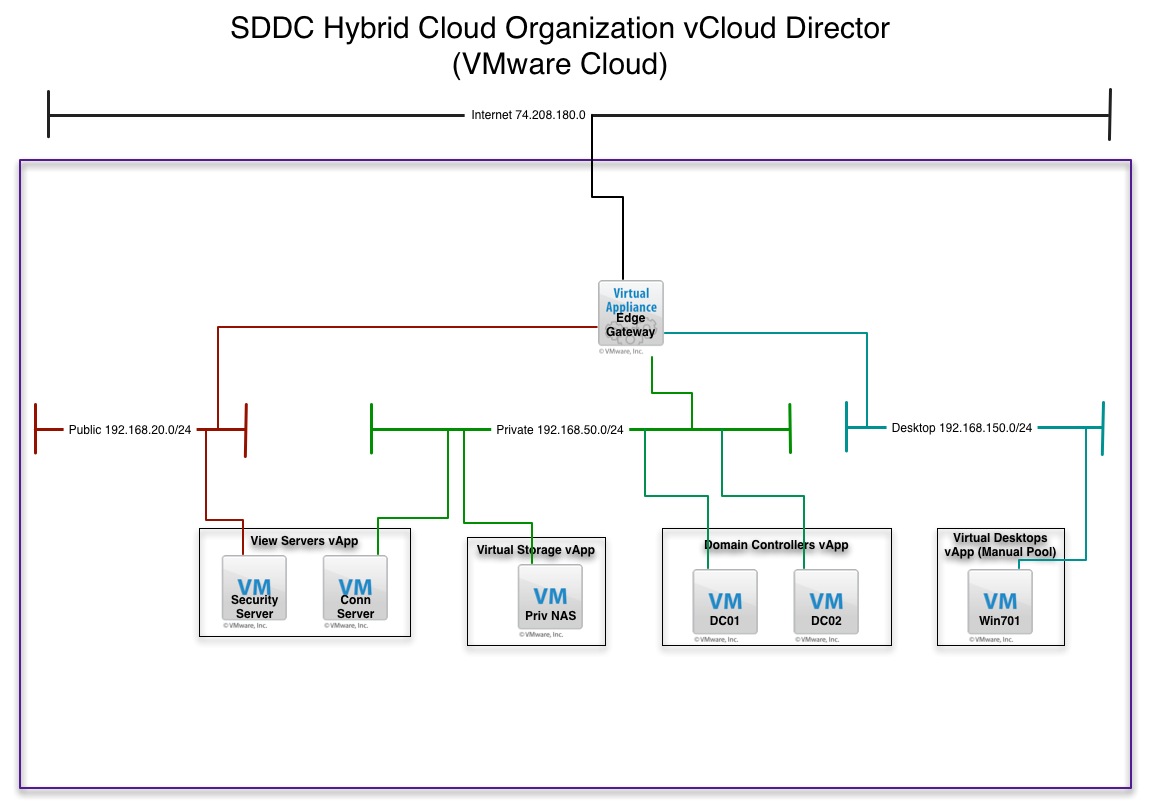

I’m actually surprised that in the last few posts nobody has picked up on the fact that I am showing some VMware View servers along with a View PCoIP desktop in my diagrams. This is of course unsupported, but I wanted to see if it could be done. The reasoning was pretty simple: I wanted PCoIP remote access to my public vCloud Director hybrid cloud setup without using the VMware Remote Console. I wanted something less bulky that I could use on any device. However, this does come at an interesting price, so here is what I discovered. Below is a simplified view of the earlier diagrams showing just the VMware View components. Thanks to Kris Boyd for helping out on this one.

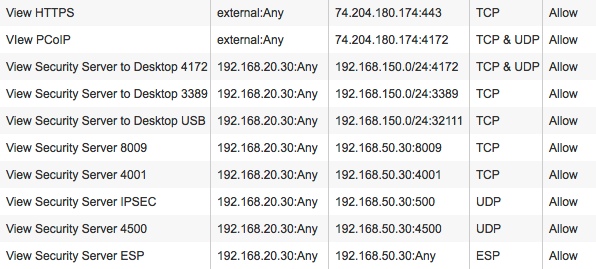

vCloud Director Edge Firewall Rules

Like anything else in this little experiment, nothing is left wide open from a firewall perspective between the security server, connection server, and the desktops. I did decide to put all desktops on one network for ease of writing the firewall rules. Below is the output of the vShield Edge Gateway firewall rules just for the View Security Server which is obviously configured for secure tunneling.

You can see there are a couple of rules for external access to the View Server through the external NAT address. There are three rules for the Security Server to access the desktops on certain ports, and finally there are the rules for Security Server to Connection Server. I will write another post on the ESP protocol tomorrow, as this is a little bit of an undocumented feature of the Edge Gateway. There is also a rule not shown that is an allow-any FROM the desktop subnet to the Public Subnet and the Private Subnet, since traffic in that direction may be trusted.
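To make the shape of that rule set concrete, here is a minimal Python sketch of an allow-list with an implicit default deny. Everything specific in it is an assumption on my part and not copied from the screenshot: the addresses are made-up placeholders, and the ports are the commonly documented View defaults (443 and 4172 for external access, 3389 and 4172 to the desktops, 8009, 4001, UDP 500 and ESP between the Security Server and the Connection Server). The rule names, the `Rule` class and `is_allowed` are hypothetical helpers for illustration only.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional

# Placeholder addressing (assumption), not the real networks from the post.
ANY = "0.0.0.0/0"
SECURITY_SERVER = "192.168.10.10/32"
CONNECTION_SERVER = "192.168.20.10/32"
DESKTOPS = "192.168.30.0/24"


@dataclass(frozen=True)
class Rule:
    name: str
    src: str             # source network in CIDR form
    dst: str             # destination network in CIDR form
    proto: str           # "tcp", "udp" or "esp"
    port: Optional[int]  # None for protocols without ports (ESP)


# Ports are the usual View defaults (assumption); check your own deployment.
RULES = [
    Rule("ext-https", ANY, SECURITY_SERVER, "tcp", 443),
    Rule("ext-pcoip-tcp", ANY, SECURITY_SERVER, "tcp", 4172),
    Rule("ext-pcoip-udp", ANY, SECURITY_SERVER, "udp", 4172),
    Rule("ss-desktop-rdp", SECURITY_SERVER, DESKTOPS, "tcp", 3389),
    Rule("ss-desktop-pcoip-tcp", SECURITY_SERVER, DESKTOPS, "tcp", 4172),
    Rule("ss-desktop-pcoip-udp", SECURITY_SERVER, DESKTOPS, "udp", 4172),
    Rule("ss-cs-ajp13", SECURITY_SERVER, CONNECTION_SERVER, "tcp", 8009),
    Rule("ss-cs-jms", SECURITY_SERVER, CONNECTION_SERVER, "tcp", 4001),
    Rule("ss-cs-ike", SECURITY_SERVER, CONNECTION_SERVER, "udp", 500),
    Rule("ss-cs-esp", SECURITY_SERVER, CONNECTION_SERVER, "esp", None),
]


def is_allowed(src: str, dst: str, proto: str, port: Optional[int] = None) -> bool:
    """Return True if any rule matches; anything unmatched is denied."""
    for rule in RULES:
        if (
            rule.proto == proto
            and rule.port == port
            and ip_address(src) in ip_network(rule.src)
            and ip_address(dst) in ip_network(rule.dst)
        ):
            return True
    return False
```

With this layout an outside client can reach the Security Server on 443, but cannot reach a desktop directly on 3389; only the Security Server can.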

View Manual Pool

Obviously in a public hosted cloud there is no vCenter access so the desktops I created are on the domain and not controlled by vCenter. I installed the VMware View Agent using the unmanaged command in the templates:

VMware-viewagent-XXXXXX.exe /v"VDM_VC_MANAGED_AGENT=0"

By doing this the desktops are unmanaged and can be added to a Manual Pool for use by VMware View. Of course, if you are a Virtual Private Cloud customer with your own vCenters, you can host the Connection and Security Servers in vCloud Director and keep the desktops in a dedicated vCenter outside vCloud Director. However, for me the manual pool option is fast and works just fine to get me the remote access I need to my back-end public cloud IaaS.

Limitations of View PCoIP Inside vCloud Director

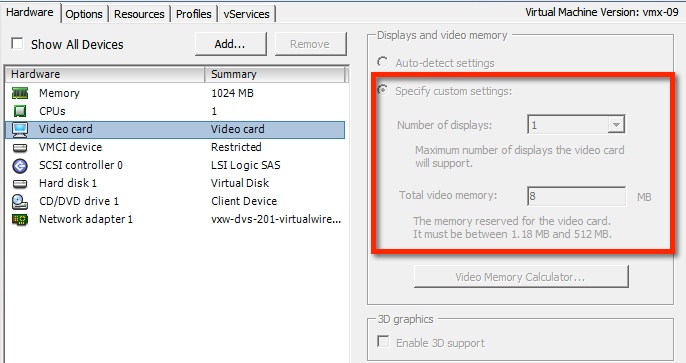

Now, although this all works pretty well and is a standard kind of setup, I discovered something that makes it not so practical yet: there is a limitation on the Video RAM that vCloud Director allows on Windows virtual machines. In testing I always got the dreaded “Black Screen”, and I was troubleshooting for a day or so. Finally I stood this up in a lab and did some digging. I examined the virtual machine’s settings and noticed that on any Windows machine controlled by vCloud Director, the Video RAM is set to 8MB. This is much too low for most of the larger PCoIP resolutions to work. Although Remote Desktop seems to have no issues, PCoIP cannot go much above 1200 pixels before you lose the screen.
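A rough way to see why 8MB runs out is to estimate the framebuffer size as width × height × 4 bytes (32-bit color) per monitor. The Python sketch below is only that back-of-the-envelope estimate, assuming 32-bit color and no extra overhead; the function names and the list of resolutions are mine, chosen for illustration.

```python
BYTES_PER_PIXEL = 4   # assumes 32-bit color
MIB = 1024 * 1024


def video_ram_needed_mib(width: int, height: int, monitors: int = 1) -> float:
    """Estimate the video RAM (in MiB) needed for a given resolution."""
    return width * height * BYTES_PER_PIXEL * monitors / MIB


def fits(width: int, height: int, vram_mib: float = 8, monitors: int = 1) -> bool:
    """Check whether the estimate fits in the available video RAM."""
    return video_ram_needed_mib(width, height, monitors) <= vram_mib


if __name__ == "__main__":
    # Illustrative resolutions only; 8 MiB is the value vCloud Director applied.
    for w, h in [(1280, 1024), (1680, 1050), (1920, 1200), (2560, 1600)]:
        needed = video_ram_needed_mib(w, h)
        verdict = "fits" if fits(w, h) else "does NOT fit"
        print(f"{w}x{h}: {needed:.2f} MiB, {verdict} in 8 MiB")
```

By this estimate 1680x1050 still fits in 8MB, while 1920x1200 needs a little under 9MB and does not.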

At this time there is no way to adjust the Video RAM setting through vCloud Director’s UI or APIs, so if you do this in a lab, understand that you should be fine on mobile devices, but on a laptop you will need to shrink the window. It goes without saying that I did try to manually change the Video RAM, but with anything vCloud Director controls, the settings revert to the ones coded on the vApp. I am trying to dig further to see if this can be changed somewhere on the backend. This will happen consistently if you try to build the virtual machine from within vCloud Director. So there is a better answer to this.

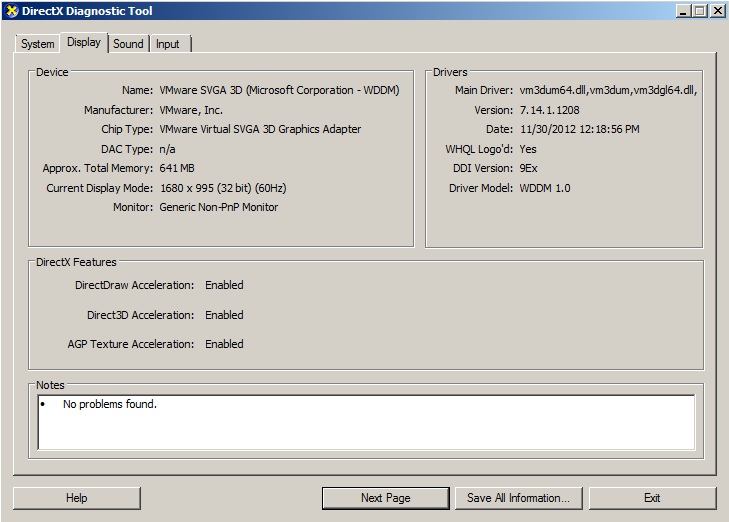

Per Hugo’s comments below, the best way to create a desktop image for vCloud Director is to build it in vCenter first. Then you can adjust the Video RAM settings to something higher and they will remain. I created a new template in a remote vCenter Server, set the Video RAM to 512MB, enabled 3D, and then exported it as an OVF and imported it into vCloud Director. To make things faster you COULD just create the VM shell as you want it, export that into vCloud Director, and then build from ISO. The trick here is to make sure you pre-configure the virtual machine, export it to an OVF, and import that into vCloud Director. Below you can see the DXDIAG output showing the increased memory, and full screen now works perfectly!
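Before importing, it can be worth confirming that the exported OVF descriptor actually carries the larger Video RAM value. The sketch below is a hedged example, not a documented procedure: it assumes the descriptor stores the setting as a config entry whose key is `videoRamSizeInKB`, which is how I recall vSphere exports writing it, so verify that against your own file. The `video_ram_kb` helper and the trimmed-down `SAMPLE` descriptor are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def video_ram_kb(ovf_xml: str) -> Optional[int]:
    """Return the video RAM size in KB from an OVF descriptor, or None if absent.

    Assumes an element with key="videoRamSizeInKB" and a value attribute;
    namespace prefixes on the attributes are ignored.
    """
    root = ET.fromstring(ovf_xml)
    for elem in root.iter():
        key = value = None
        for attr, val in elem.attrib.items():
            local = attr.rsplit("}", 1)[-1]  # strip "{namespace}" if present
            if local == "key":
                key = val
            elif local == "value":
                value = val
        if key == "videoRamSizeInKB" and value is not None:
            return int(value)
    return None


# Trimmed, hypothetical descriptor for illustration; 524288 KB = 512 MB.
SAMPLE = """<Envelope xmlns="http://schemas.dmtf.org/ovf/envelope/1"
          xmlns:vmw="http://www.vmware.com/schema/ovf">
  <VirtualSystem>
    <VirtualHardwareSection>
      <Item>
        <vmw:Config vmw:key="videoRamSizeInKB" vmw:value="524288"/>
      </Item>
    </VirtualHardwareSection>
  </VirtualSystem>
</Envelope>"""

if __name__ == "__main__":
    size_kb = video_ram_kb(SAMPLE)
    print(f"Video RAM in descriptor: {size_kb} KB ({size_kb // 1024} MB)")
```

To check a real export, read the `.ovf` file into a string and pass it to `video_ram_kb`; a result of `None` means no such entry was found in the descriptor.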

Chris Colotti's Blog Thoughts and Theories About…

Hi,

We have been testing this inside a vDC for a while. To get the video settings right, you need to change them on the source VM that you import. Set them there, and they are retained when you import the VM.

Also look at making this a catalog item with a post task to add it to the domain and to run a script to add it to the VDI server.

Would be nice if VDI would be able to add a vDC as a target instead of vCenter.

Hugo Strydom.

Hugo, I tried changing the source VM before import and vCD always sets it to 8MB no matter what it was set to. I can try again, but I imported the source VM with both smaller and larger values with no luck; it remained the same. Also, on the public side I don’t have control over the vCenter, so that makes it more difficult. I will test again, but on import and power-on it was always showing 8MB after vCD takes control.

I think you are right, my friend. I don’t think I tried changing the base VM before the import; I was only trying to adjust it after. Still, it would be nice if that was exposed 🙂

Awesome post…this is EXACTLY what I’ve been looking for, as I want to set up the same scenario. It’s interesting about the video limitation inside of vCD, and I appreciated the exchange between you and Hugo. Very well done.

Thanks, glad we worked it out and yes I am using it every day and it’s great now 🙂