I have long said that I am almost never the smartest person in the room. However, when it comes to new technology, I do believe I learn what I need quickly and efficiently, so that I can at least understand the concepts. In the last two weeks I have done just that and completely transformed my vCloud in a Box installation into a fully working, multi-hypervisor Nicira NVP lab. I wanted to take a few moments to share some of what I have learned about the fundamentals of this new concept in virtualized networking from my point of view. Simply put, Nicira virtualizes the network in much the same way VMware virtualizes servers. This is not meant to be anything more than some very high-level points I have learned in the last few weeks. As I get more into the weeds I may add some depth to this. I am also thinking about documenting my lab build so folks can see just what I stood up on a single, very overcommitted ESXi 5.1 host.

Logical vs Physical

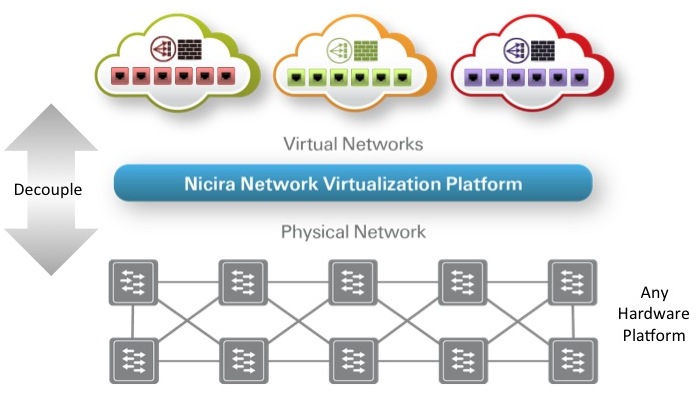

This sounds very familiar, does it not? The concept is that we are decoupling the logical networking from the physical networking, in much the same way server virtualization decoupled the operating systems and software from the physical server hardware. What I have been making myself think about is the logical layers more than the physical layers. In fact, when you look at the Nicira NVP management UI, things are referred to as Logical Switches and Logical Switch Ports. The fundamental difference is that this logical space itself is not quite a virtual switch. The virtual switch exists at the hypervisor level, and that is where we can programmatically control things. The logical space is what is created when all the Transport Nodes are connected to the fabric, and it is how you get cross-hypervisor connectivity between virtual machines through the logical switches and ports.

What is a Transport Node?

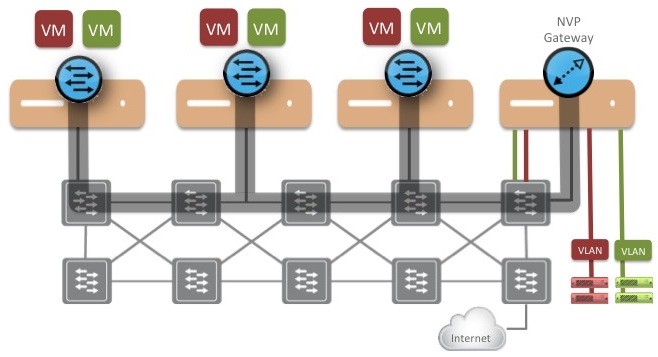

This took me a little time to grasp, but a Nicira NVP transport node is really anything that is running Open vSwitch. Each transport node connects to every other transport node in the fabric using one of the available tunneling protocols. When logical ports are made active, tunnels are established for any and all available connections between transport nodes. This tunneling is where the encapsulation really happens between transport nodes. Examples of the various Nicira NVP transport nodes are:

- Hypervisors running Open vSwitch (XEN, KVM, ESX)

- Note: ESXi does not have an integrated OVS like XEN and KVM at this time. It relies on a virtual machine appliance running on the ESXi hosts to work. I may do a separate post on this just to explain the architecture as it is now.

- NVP Gateway Nodes (L2 or L3)

- NVP Service Nodes
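To get a feel for what "every transport node connects to every other transport node" means in practice, here is a minimal Python sketch that lists the tunnel pairs for a small fabric. The node names are made up for illustration, and the count is only an upper bound: it assumes every node ends up needing a tunnel to every other node, which depends on which logical ports are actually active.

```python
from itertools import combinations


def full_mesh_tunnels(nodes):
    """Return every unordered pair of transport nodes (one tunnel per pair)."""
    return list(combinations(sorted(nodes), 2))


# Hypothetical node names, one per transport node type listed above.
transport_nodes = [
    "xen-01",
    "kvm-01",
    "esx-ovs-vm-01",
    "l2-gateway-01",
    "service-node-01",
]

tunnels = full_mesh_tunnels(transport_nodes)

for a, b in tunnels:
    print(f"{a} <-> {b}")

# A full mesh of n nodes has n * (n - 1) / 2 tunnels.
print(f"{len(transport_nodes)} nodes -> up to {len(tunnels)} tunnels")
```

The point is simply that the pair count grows quickly as nodes are added, which is why nobody wants to build these tunnels by hand.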

Bridging from Logical to Physical

This is something that took me a while to get working, but that was mostly because my lab is nested beyond belief. The concept, however, is simple. We need a way to get out of the logical switch space, where virtual machines are communicating, to the physical space, where other devices may be. The answer is the Nicira NVP Layer 2 Gateway Service. Once I got this up and running it was pretty sweet. It gives the logical space a very efficient way to let virtual machines on the same Layer 2 IP subnet connect back out on their respective VLANs on the physical side. Conceptually it makes sense, and it made even more sense once I got it configured. Think of it as similar to vCloud Director Direct Connect Networks, but without the coupling to the physical network that the Direct Connect option brings. You get direct Layer 2 connectivity while still decoupling from the physical hardware. You always have the option of doing this with the NVP L3 Gateway Service, but if you don’t want to deal with NAT routing and firewall rules, the L2 Gateway Service has cool written all over it.

The Importance of a CMS (Cloud Management System)

This is something that I learned very quickly: the CMS is the key to making all this work. If you do not already have some level of CMS up that can connect to the Nicira NVP REST APIs to create Logical Switches and Logical Switch Ports, it’s just a lot of manual labor. In my home lab, of course, I did not take the time to set up CloudStack or OpenStack, since that alone is another learning curve, but you need something in place before you venture into this space. I just know it’s the key to orchestrating the on-demand creation of all the virtualized networks. I do not plan on being anywhere close to an expert on all the available CMS options out there, that’s for sure.
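To make the "manual labor" point a little more concrete, here is a rough Python sketch of the kind of request plan a CMS would have to produce for a single network: one call to create the logical switch, then one call per virtual machine to create a logical switch port. Everything specific here is an assumption for illustration. The paths, the `display_name` field, and the `plan_network` helper are placeholders, not the actual NVP REST API, and nothing is sent over the wire.

```python
import json
from dataclasses import dataclass, field

# Placeholder paths for illustration only; NOT taken from the NVP API docs.
SWITCH_PATH = "/example/logical-switches"
PORT_PATH = "/example/logical-switches/{switch_id}/ports"


@dataclass
class PlannedRequest:
    method: str
    path: str
    body: dict = field(default_factory=dict)


def plan_network(name, vm_names):
    """Build the ordered list of calls a CMS might issue for one network."""
    # First call: create the logical switch itself.
    requests = [PlannedRequest("POST", SWITCH_PATH, {"display_name": name})]
    # Then one logical switch port per virtual machine.
    for vm in vm_names:
        requests.append(
            PlannedRequest(
                "POST",
                PORT_PATH.format(switch_id="<id-from-first-response>"),
                {"display_name": f"{name}-{vm}"},
            )
        )
    return requests


plan = plan_network("web-tier", ["vm-a", "vm-b", "vm-c"])
for req in plan:
    print(req.method, req.path, json.dumps(req.body))
```

Even this toy version needs four calls for three virtual machines on one network. Multiply that by every tenant network and you can see why you want a CMS doing the orchestration.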

So Much More to Learn!

What I can say is that these are the real basics of what I have picked up in the last couple of weeks. Getting this all up and running in my home lab, and learning how to install and set up KVM and XEN not only on their own but along with the Open vSwitch and Nicira NVP components, was a pretty cool goal to hit. Now it is about helping qualified customers see how they can leverage this new technology. Bear in mind this is not for everyone out of the gate; we have a pretty tight qualification process. It has very specific use cases right now as integration work continues, especially for “Pure” VMware customers. I am also looking into other interesting processes, like how one would move workloads from traditional vSwitches to the new Nicira NVP model, but even that does not seem so difficult, provided things like Gateway Services are in place ahead of time. All in all it has been a cool first couple of weeks banging on this stuff in my lab and drinking from the fire hose. What I can tell you is that this is some very cool technology I am getting to play with.

Chris Colotti's Blog Thoughts and Theories About…
