As many people know, I have been in Barcelona this week for VMworld 2012. Over the last couple of days I have spent some great time in conversations with Thomas Kraus and Kamau Wanguhu, who are also on my team. I have also had the pleasure of interacting with some of the Nicira folks, like Steve Tegler and Martin Casado himself. All of this got me thinking about my past roles as a system admin with vSphere, and trying to drive home some points in my own mind about where and how Nicira NVP could change a lot of thinking. With that said, I wanted to run down what was a typical process for me not too long ago when it came to adding or dealing with virtual networks and VLANs in vSphere. Trust me, though, after this week I have a lot of ideas coming.

For most of us who were, or currently are, vSphere administrators, one big problem we have all encountered is knowing whether all the needed VLANs are properly trunked to the hypervisors. When we build a new cluster or add hypervisors to an existing cluster, new physical ports need to be provisioned for us. This has the following ramifications:

- The Network team needs to go through proper change management which takes time

- The Network team needs to add the new VLANs to the existing trunk ports

- The Network team needs to add all the VLANs to the trunks on the new physical ports for the new hypervisors

- The vSphere team needs to validate that everything is working properly and, if not, work with the Network team to troubleshoot

The last bullet is probably the most time-consuming for the hypervisor teams, and here is the basic process I used to go through myself to validate that everything was working.

- Create a new Virtual Machine and attach it to the first VLAN

- Begin constant ping test

- vMotion that Virtual Machine to every host in the cluster

- Change the Port Group to the next VLAN

- Begin constant ping test

- vMotion that Virtual Machine to every host in the cluster
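The manual steps above amount to a nested loop: for each VLAN, move the test Virtual Machine to every host and check the ping. Here is a minimal sketch of that loop in Python. It does not call any real vSphere API; `set_port_group`, `migrate_to`, and `ping_ok` are hypothetical stand-ins you would have to supply yourself, and the host names and trunk data are made up purely for illustration.

```python
from typing import Callable, Iterable, List, Tuple


def validate_vlans(
    vlans: Iterable[int],
    hosts: Iterable[str],
    set_port_group: Callable[[int], None],
    migrate_to: Callable[[str], None],
    ping_ok: Callable[[], bool],
) -> List[Tuple[int, str]]:
    """Return the (vlan, host) pairs where the test VM lost connectivity."""
    host_list = list(hosts)
    failures = []
    for vlan in vlans:
        set_port_group(vlan)      # hypothetical: move the test VM to this VLAN's port group
        for host in host_list:
            migrate_to(host)      # hypothetical: stand-in for a vMotion to this host
            if not ping_ok():     # hypothetical: stand-in for checking the running ping
                failures.append((vlan, host))
    return failures


# Simulated environment (made-up data): which VLANs are trunked to which host.
trunks = {"esx01": {10, 20, 30}, "esx02": {10, 30}}
state = {"vlan": None, "host": None}

failures = validate_vlans(
    vlans=[10, 20, 30],
    hosts=trunks,
    set_port_group=lambda v: state.update(vlan=v),
    migrate_to=lambda h: state.update(host=h),
    ping_ok=lambda: state["vlan"] in trunks[state["host"]],
)
print(failures)  # [(20, 'esx02')]
```

In this simulated run, VLAN 20 is missing from the trunk on `esx02`, which is exactly the kind of gap the manual walk-through is meant to catch before DRS finds it for you.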

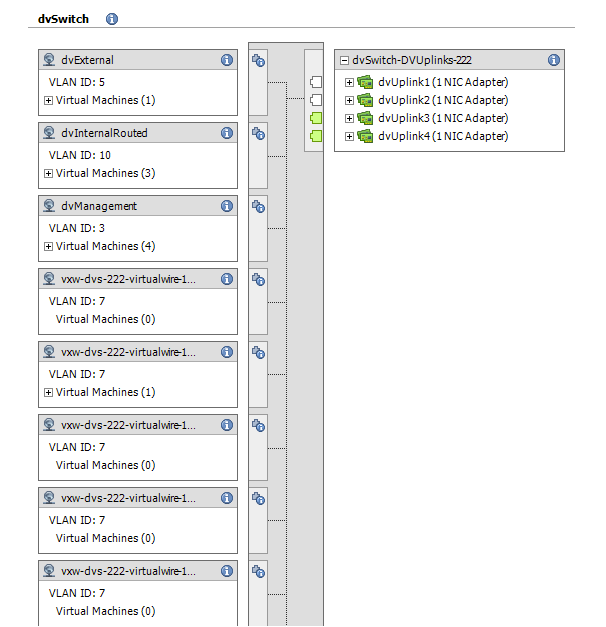

Now imagine this new cluster had 20 or more VLANs attached to it from the start. It takes a lot of cycles to “rinse and repeat” this process just to make sure that network connectivity is working properly across all hypervisors. Only then would we put the cluster, newly added host, or VLAN into production. Does this process sound at all familiar to anyone? I hope so. If not, we all know what happens if you don’t validate at this level and DRS moves a Virtual Machine to a host without the proper trunks. The image above is a typical network view of vSphere and the port groups needed for various VLAN connectivity. Keep this in mind as we go forward.
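To put a rough number on the “rinse and repeat” cost, here is a small back-of-the-envelope calculation. The 20 VLANs come from the scenario above; the 8-host cluster size is purely an assumed figure for illustration, and the function name is my own.

```python
def validation_checks(num_vlans: int, num_hosts: int) -> int:
    """Roughly one vMotion-plus-ping check per VLAN per host."""
    return num_vlans * num_hosts


# 20 VLANs is from the scenario; 8 hosts is an assumed cluster size.
print(validation_checks(20, 8))  # 160
```

Every added VLAN or host adds to that count, which is why this validation eats so many cycles.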

The Solution:

Well, at this point you should already know that I am going to say network virtualization solves many use cases, but this one is a particular pain point for vSphere administrators. Network virtualization with overlay technologies like Nicira can drastically reduce this operational overhead. I have been so excited about the technology in the last couple of weeks that this kind of use case was escaping me. However, think about that picture above and how it changes. With Nicira NVP in particular, as described in my previous article, all those same Virtual Machines now simply connect to the SAME vSphere port group.

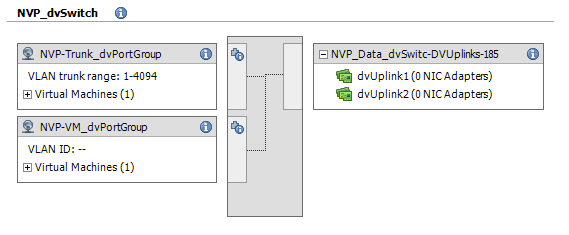

What Nicira NVP inherently brings to the table is network simplicity at the physical layer. All those VLAN port groups and trunk configurations are no longer needed, except perhaps in the case of the NVP Layer 2 Gateway, which I may post about later. All other virtual machine traffic is carried in the overlay tunnel and thus requires only simplified connectivity at the hypervisor. The image above is essentially what your vSphere networking view would look like afterward. Instead of all those VLAN port groups, you essentially end up with one.

So what I am saying here is that the original problem stated above, which we have all gone through or are continuing to go through, becomes much easier to manage, and that in turn helps enable cloud. That is just one example of something that was somewhat of an “Ah-Ha” moment for me, so I figured I would share it. Please comment if this is a problem you are dealing with now or have had to deal with in the past. I am gathering data on this and other use cases so that our team can test some interesting things.

Chris Colotti's Blog Thoughts and Theories About…
