So this weekend I set out to document and understand the new aspects of vCloud Connector 1.5 and how the components fit together. It seemed like there might be some interest in a how-to article explaining not only how to put the pieces together, but also how to actually move workloads. So I set out on a mission to explain some of this in detail. The first thing anyone will need in order to truly try out vCloud Connector is some public cloud space with a provider that is running vCloud Director. My choice was Virtacore for a number of reasons, not least that they were open to letting me try out their beta portal and provide some initial feedback. If you do sign up with them, you can get $50 off your initial public cloud service by using the code STEKREF, so not a bad deal if you have not tried a vCloud Express provider yet.

vCloud Connector 1.5 Architecture

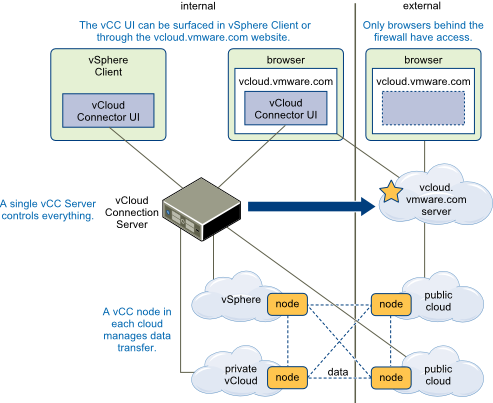

First, I think it is important to understand the architecture of vCloud Connector 1.5, as it differs greatly from 1.0. Anyone who has played with both will see the differences right out of the gate, but I also wanted to tie the architecture to how I deployed the various components for testing. Refer to the figure above, which was taken from the vCloud Connector user guide.

vCloud Connector Server – The server component is the control and management point for vCloud Connector. You really only need one server, as long as it can connect to the various nodes. In my case the server was hosted in my lab, on the management cluster for vCloud Director. I did not host it inside my vCloud as a vApp simply because I did not see the need to. Instead, I treated it like any other management server workload supporting the vCloud ecosystem.

vCloud Connector Node – The “nodes” are the 1:1 connection points managed by the server. The 1:1 aspect is that you need one node per cloud, Organization, or vSphere instance you want to move workloads between. So in my case I needed two nodes on premise and two nodes hosted in the Virtacore cloud. I needed two remote nodes because Virtacore's public cloud is made up of two datacenters, each with its own vCloud installation and thus its own API. The nodes are also where the exports happen during a move, so you may need to increase their disk sizes, or mount NFS storage to them if you can. In my case I connected all the nodes at either the SYSTEM level of vCloud Director or the top level of vSphere for testing. In a hosted public cloud you could need more nodes, depending on the number of organizations you have as well as the number of datacenters.
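The 1:1 rule makes the node count easy to reason about: count the distinct endpoints. This is a minimal sketch, assuming an endpoint can be modeled as a (vCloud Director installation or vCenter, scope) pair. All of the names are hypothetical labels for the layout described in this post, not identifiers vCloud Connector itself uses.

```python
from typing import Iterable, Tuple

# (vCloud Director installation or vCenter, scope): hypothetical labels only
Endpoint = Tuple[str, str]

def nodes_needed(endpoints: Iterable[Endpoint]) -> int:
    """Count vCC nodes under the 1:1 rule: one node per distinct endpoint."""
    return len(set(endpoints))

# The layout from this post; every name is an illustrative label.
my_lab = [
    ("lab-vcd", "SYSTEM"),         # on-premise vCloud Director, system level
    ("lab-vcenter", "top-level"),  # on-premise vSphere
    ("virtacore-va", "my-org"),    # hosted vCD, Virginia datacenter
    ("virtacore-la", "my-org"),    # hosted vCD, Los Angeles datacenter
]

# A second hosted organization in Virginia would need its own node.
with_second_org = my_lab + [("virtacore-va", "second-org")]
```

Here `nodes_needed(my_lab)` gives the four nodes in my setup. Adding the second organization raises the count to five, which matches the point that a hosted cloud may need more nodes per organization and per datacenter.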

vCloud.VMware.com – This is the remote web portal you can use to manage your various vCloud Connector servers from a single pane of glass. There are some requirements for getting connected to it, which we will talk about in a little bit. UPDATED 12/7/11: I wanted to point out a couple of things about the portal. Although the portal is internet based, it directs your browser to the local address of the vCloud Connector Server. This means you cannot manage the vCloud Connector Server unless you also have VPN or on-premise access to it. The server could be hosted externally with an external IP, but as of today it will not work behind NAT. Some folks have discovered this, and there is a KB article about it. Just take note that even though you are accessing a public portal, it will send your browser to the local IP addresses. This also means that a firewall rule for “External” access is not needed until the NAT issue is worked out. For the same reason, you will not be able to open console connections to some virtual machines if the vCloud Director console IP is not exposed and properly directed or load balanced.
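The update above boils down to a simple check: if the server address the portal hands your browser is a private address, you can only reach it from inside the network or over VPN. This is a minimal sketch using Python's standard `ipaddress` module. The function name and the sample URLs are hypothetical, and name resolution is limited to IPv4 in this sketch.

```python
import ipaddress
import socket
from urllib.parse import urlparse

def needs_vpn(server_url: str) -> bool:
    """Return True if the vCC server URL the portal redirects your browser to
    points at a private address, i.e. you must be on premise or on VPN."""
    host = urlparse(server_url).hostname
    if host is None:
        raise ValueError(f"no host in {server_url!r}")
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not a literal IP: resolve the name (IPv4 only in this sketch).
        addr = ipaddress.ip_address(socket.gethostbyname(host))
    return addr.is_private
```

For example, `needs_vpn("https://192.168.10.25/")` is True, so a browser outside the lab would fail to load the server even though the portal itself is public.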

Security – It is important to understand the security of these components. The server and nodes communicate over SSL on port 8443 regardless of where the nodes are located. It stands to reason, then, that you will want to generate real certificates at least for your vCloud Connector Server, since it connects to the remote nodes as well as to the portal. The local nodes may not be as big a concern since they are on the same network. However, if you are transferring a workload from a private cloud to the public cloud, the two nodes communicate with each other, and then you have an argument for real certificates on all nodes as well. In a production deployment I would use real certificates everywhere, but in my lab I decided not to, just for the sake of testing.
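Before you chase certificate problems, it helps to confirm that port 8443 is reachable at all and to see whether a node presents a certificate your machine trusts. This is a minimal sketch using only Python's standard `socket` and `ssl` modules. The function names are hypothetical. "Trusted" here means the certificate validates against the local system CA store and matches the host, which is typically not the case for a self-signed appliance certificate.

```python
import socket
import ssl

VCC_PORT = 8443  # SSL port between the vCC server and its nodes

def port_open(host: str, port: int = VCC_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False

def cert_trusted(host: str, port: int = VCC_PORT, timeout: float = 3.0) -> bool:
    """Return True if the TLS certificate on host:port validates against the
    system CA store and matches host. Return False if verification fails (for
    example a self-signed certificate). Connection errors are raised."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        try:
            with ctx.wrap_socket(sock, server_hostname=host):
                return True
        except ssl.SSLCertVerificationError:
            return False
```

From the server you could loop over your node addresses and flag any where `port_open` is False. Once you have installed real certificates, you can also flag any where `cert_trusted` is still False.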

Once I had decided on the deployment scenarios, I ended up with the following servers on my network:

- vCloud Connector Server – on the same local subnet as the other management virtual machines

- vCloud Connector Node – on the same subnet as other management components, for the vCloud Director connection

- vCloud Connector Node – on the same subnet as other management components, for the vSphere connection

- vCloud Connector Node – Remote on Virtacore in the Virginia Datacenter

- vCloud Connector Node – Remote on Virtacore in the Los Angeles Datacenter
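The server list above also tells you which connections need to be allowed through your firewalls. This is a minimal sketch that turns the inventory into a list of flows to open, and the node names are hypothetical. The server-to-node flows follow from the Security paragraph (SSL on port 8443). The node-to-node flow is an assumption based only on the note that the two nodes talk to each other during a private-to-public transfer. The direction and the reuse of port 8443 for that flow are guesses, not documented behavior.

```python
from typing import Dict, List, Tuple

PORT = 8443  # server <-> node SSL port from the Security section
SERVER = "vcc-server"

# Hypothetical names for the four nodes listed above.
NODES: Dict[str, str] = {
    "node-lab-vcd": "on-premise",
    "node-lab-vsphere": "on-premise",
    "node-virtacore-va": "hosted",
    "node-virtacore-la": "hosted",
}

Flow = Tuple[str, str, int]  # (source, destination, TCP port)

def required_flows(server: str, nodes: Dict[str, str], port: int = PORT) -> List[Flow]:
    # The server manages every node, local or remote, over SSL.
    flows = [(server, node, port) for node in nodes]
    # ASSUMPTION: a private-to-public transfer opens a connection from the
    # on-premise node to the hosted node, on the same port.
    for src, src_loc in nodes.items():
        for dst, dst_loc in nodes.items():
            if src_loc == "on-premise" and dst_loc == "hosted":
                flows.append((src, dst, port))
    return flows
```

For this layout, `required_flows(SERVER, NODES)` yields eight flows: four from the server to each node, and four from each on-premise node to each hosted node.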

Installing the Various Components

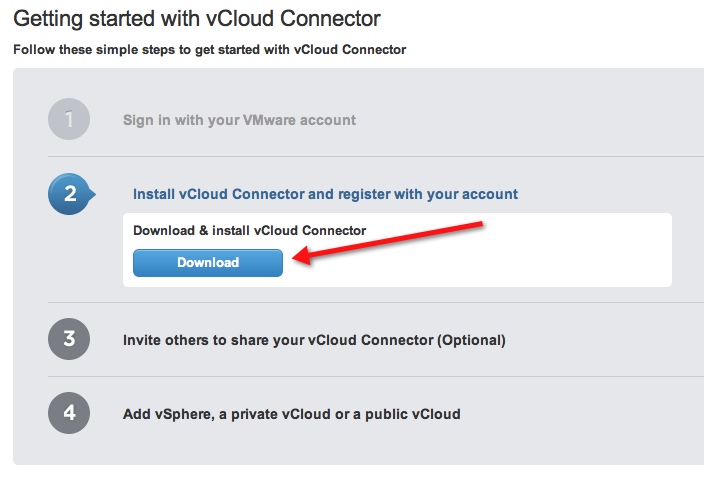

The first step is to visit vcloud.vmware.com and register a username on the portal. Bear in mind that this is the primary username that will be used to connect the server to the portal. You can invite others to use the portal, but I would not suggest using your personal email for the initial login. You may want to make it something more generic to the company, as you may already do for the support or licensing portal. In fact, if you already have a single company login, you may be able to use that, since it is a valid vmware.com account.

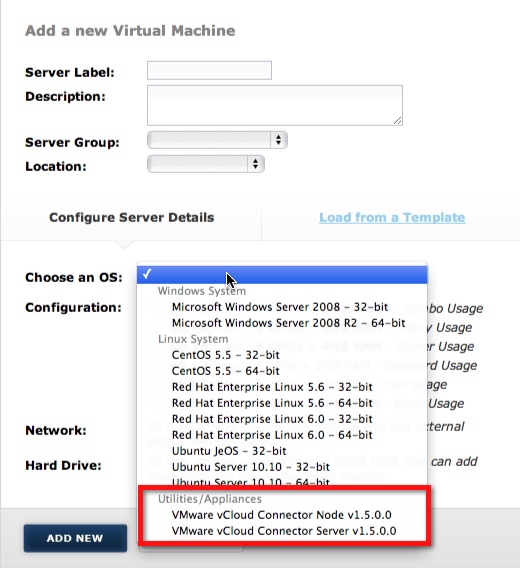

Next, set up a public cloud testing account at Virtacore or another provider. Virtacore has already put the server and node components in their catalog for users to deploy into their hosted public cloud, saving you the setup time on that side.

Once you are logged in, the first thing you can do is download the appliances from the portal, since that is the only option available.

Once you have the OVF files, you can import as many of them as you need. As mentioned above, one server should suffice. Nodes are different: depending on the URL you use to configure a node, it will be “pinned” to that organization, so if you have multiple organizations in your cloud you may need more nodes. For my tests, again, I simply used a system-level admin, as we will see in the configuration section.
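To make the “pinned” idea concrete, here is a minimal sketch that guesses which organization a node would be pinned to from the vCloud Director URL you give it. It assumes the vCD 1.x web URL layout, where an organization lives under `/cloud/org/<org-name>` and the bare `/cloud` path is the system level. It also assumes this is the kind of URL the node configuration expects, which I have not confirmed. The function name and hosts are hypothetical.

```python
from urllib.parse import urlparse

def pinned_org(vcd_url: str) -> str:
    """Return the organization a node configured with vcd_url would be
    pinned to, or "SYSTEM" for a system-level URL.
    ASSUMPTION: vCD URLs of the form https://<host>/cloud/org/<org-name>/."""
    parts = [p for p in urlparse(vcd_url).path.split("/") if p]
    if len(parts) >= 3 and parts[0] == "cloud" and parts[1] == "org":
        return parts[2]
    return "SYSTEM"
```

Under that assumption, `pinned_org("https://vcd.example.com/cloud/org/Sales/")` returns `"Sales"`, while the system-level URL I used in my lab would return `"SYSTEM"`.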


On the Virtacore side, simply deploy a new node from the catalog using the portal as shown below. You will need to deploy one in LA and one in VA if you want access to both datacenters. Having these utility machines already available on the service provider side certainly saves the time and effort of uploading them to my own catalog.

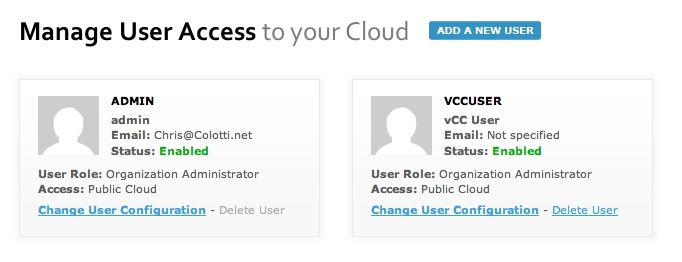

You will also want to add some users to your hosted Virtacore cloud. The initial login they provide cannot be used to connect the nodes, so you will need to add other Organization Administrators to perform most of these functions.

You will use these credentials later. As I found out this weekend, you cannot use the original login because it is also tied to their billing system. Simply go to Administration and add a new user, assigning them the Organization Administrator role.
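Because the original login is rejected, it can save a round trip to confirm that the new Organization Administrator account can log in to the provider's vCloud API before you enter it into a node. This is a minimal sketch of the vCloud API 1.5 login: a POST to `/api/sessions` with Basic authentication in the `user@org` form, which returns a session token in the `x-vcloud-authorization` response header. The host, user, and organization names are hypothetical. The login call uses normal certificate verification, so it will fail against a self-signed endpoint.

```python
import base64
import urllib.request

def build_login_request(host: str, user: str, org: str, password: str) -> urllib.request.Request:
    """Build a vCloud API 1.5 login request: POST /api/sessions with
    Basic auth in the user@org:password form."""
    token = base64.b64encode(f"{user}@{org}:{password}".encode()).decode()
    return urllib.request.Request(
        f"https://{host}/api/sessions",
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Accept": "application/*+xml;version=1.5",
        },
    )

def login(host: str, user: str, org: str, password: str) -> str:
    """Log in and return the x-vcloud-authorization session token.
    Raises urllib.error.HTTPError (e.g. 401) if the credentials are rejected;
    TLS verification uses the system CA store."""
    req = build_login_request(host, user, org, password)
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.headers["x-vcloud-authorization"]
```

A 401 from `login` with the new account usually means the user or organization name is wrong. A token back means the same credentials should work when you configure the hosted node.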

Chris Colotti's Blog Thoughts and Theories About…


Great post, Chris. I started messing with vCC two weeks ago and got stuck trying to deploy the server and nodes inside the vCloud instance rather than on the vCenter server directly. I heard that this setup process will be cleaned up in the next release to make it much easier. The whole notion of nodes and servers can be complicated. It would be nice if it were as simple as the vCenter Mobile Appliance, where I can deploy the OVF, open up the HTTP page, then register local and remote vCloud IP/DNS addresses against it and get going.

Once I decided not to run them in the vCloud itself, it made much more sense to me. Since the nodes interact with vCD, I figured: why should they be INSIDE vCD when they are hitting the API URL from the outside?

Hence, in an on-premise setup I would just put all the nodes in the management cluster outside of vCD, pointing to the vCD APIs. Of course, on the hosted side you always need to run them inside the cloud, but there, that makes sense to me. 🙂 I may draw up a basic network diagram showing it, though it really does look like the first drawing in the post. I may do more of a network-based logical view so it is totally clear how they are deployed in the lab.